Future Tense explores the ways emerging technologies affect society, policy, and culture.

This article is part of Future Tense, a collaboration among Arizona State University, the New America Foundation, and Slate.

27, 2014: The author of this piece is serving as a technical witness in a patent lawsuit against Facebook. 16, 2013: This article was updated to clarify that it is the browser code, not Facebook, that reads whatever you type. Disclosure, Feb. 15, 2013: This article was updated to include the fact that Das and Kramer’s paper was published at the International Conference on Weblogs and Social Media in addition to being posted online.

This feels dangerously close to “ALL THAT HAPPENS MUST BE KNOWN,” a motto of the eponymous dystopian Internet company in Dave Eggers’ recent novel The Circle. Facebook monitors those unposted thoughts to better understand them, in order to build a system that minimizes this deliberate behavior.

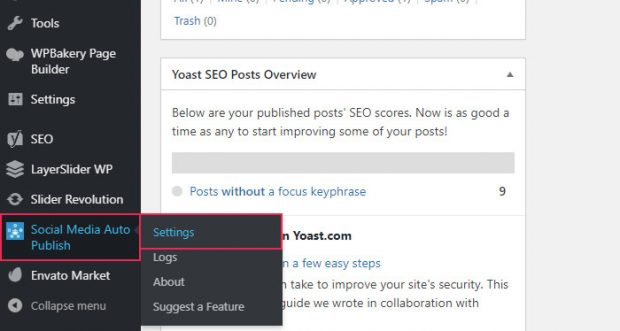

So Facebook considers your thoughtful discretion about what to post as bad, because it withholds value from Facebook and from other users. This goal, designing Facebook to decrease self-censorship, is explicit in the paper. Facebook studies this because the more its engineers understand about self-censorship, the more precisely they can fine-tune their system to minimize self-censorship’s prevalence.
